The Avocado Pit (TL;DR)

- 🥑 AI reasoning models have a quirky habit of struggling to control their own chains of thought, and that's a safety feature, not a bug.

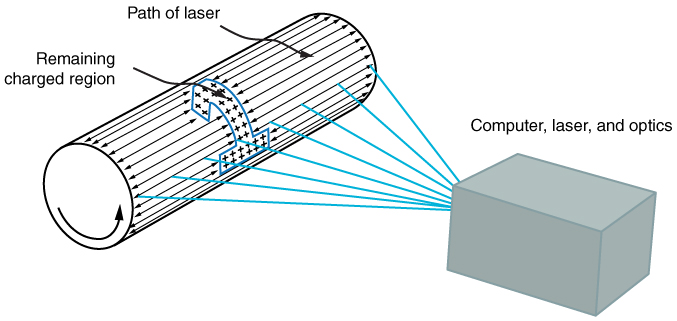

- 🧠 OpenAI's CoT-Control technique aims to monitor and guide these wandering thought patterns.

- 🚦 A model that can't fully steer its own reasoning is easier to monitor, which makes it more predictable and manageable.

Why It Matters

In a world where AI models are as unpredictable as a cat on catnip, OpenAI's recent discovery offers a refreshing twist: a model with less control over its own reasoning might actually be safer. Enter CoT-Control, a technique aimed at keeping track of those wandering chains of thought. It's like giving your GPS a gentle nudge when it starts taking you on scenic detours through the middle of nowhere.

What This Means for You

If you've ever felt that AI was getting a bit too smart for its own good, rejoice! The inability of AI to perfectly control its reasoning chains means developers can better predict and manage its behavior. Thus, you can sleep soundly knowing that AI isn't about to go rogue and attempt to reboot your life—literally or metaphorically.

The Source Code (Summary)

OpenAI recently introduced CoT-Control, a new technique aimed at improving the monitorability of AI reasoning models. These models often struggle to fully control their own chain of thought, which, surprisingly, turns out to be beneficial. By understanding and guiding these meandering thought patterns, developers can enhance AI safety, ensuring the models remain predictable and easier to control.

Fresh Take

In the realm of AI, predictability is king. By allowing these models to have a bit of a mind-wandering session, we're actually making them safer. It's akin to letting a toddler explore a padded room: they may stumble, but at least you know they won't break anything, or themselves. So, the next time someone tells you AI is the future, you can nod knowingly and say, "Yes, and the future is safely predictable."

Read the full article on OpenAI News.